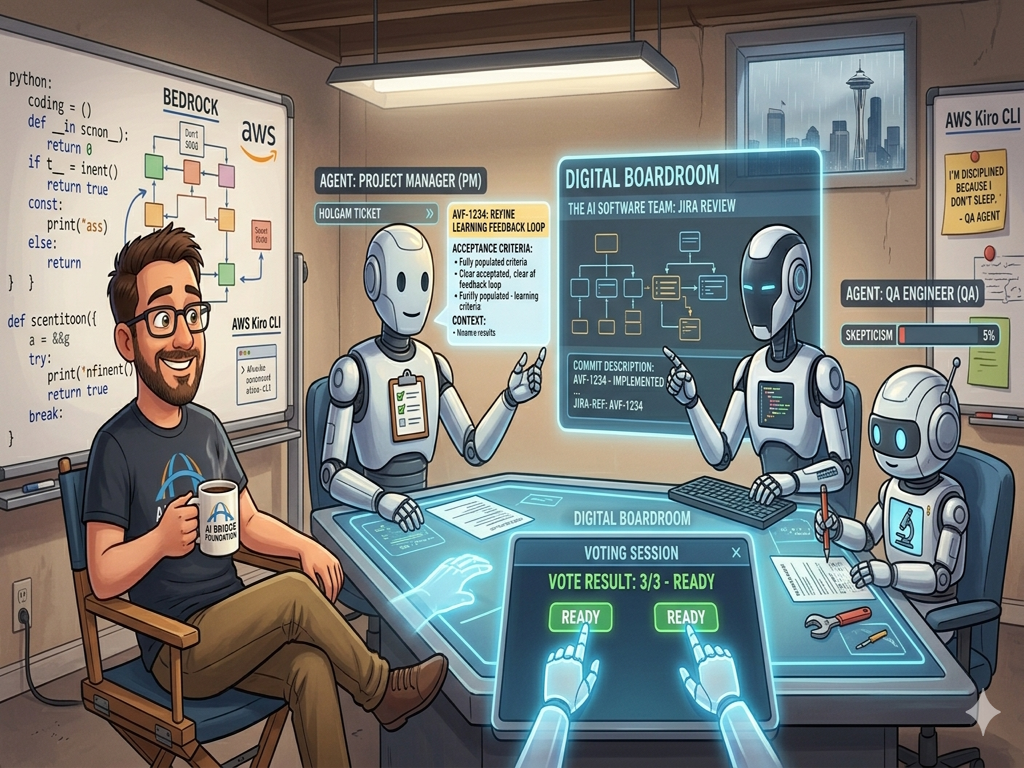

I Built a Digital Team That's More Disciplined Than I Am

I have a confession to make: I've officially been outworked by my own creations.

For the longest time, my way of working was, let's say, a bit of a solo act. When a new idea for a feature or a learning tool popped into my head, I'd just start building. I didn't write down the plan, I didn't keep notes for "future me," and I certainly didn't stop to ask if there was a better way to do it. My philosophy was simple: move fast, build it now, and figure out the rest later.

But as the AI Bridge Foundation grew, that "move fast" energy started to hit a wall. I realized that if we were serious about building tools that actually change lives, I couldn't rely on my own scattered notes and shortcuts. To build a bridge that lasts, you need discipline. But instead of trying to force myself to become a perfectly organized manager overnight, I decided to do something a bit more ambitious. I built a digital team of specialists to run my office for me.

I didn't just want a chatbot that could answer questions. I wanted a real team — with real roles, real responsibilities, and real accountability. So I built one.

The Team

There are five agents on the ABF-WEB team, and each one has a specific job title — the same titles you'd see at any software company.

The Project Manager owns the plan. When a Jira ticket comes in, the Project Manager reads it, makes sure the requirements are crystal clear, and writes acceptance criteria so specific that any engineer could pick it up without asking a single question. If the Developer and QA disagree on something, the Project Manager doesn't list both opinions; they make a call, explain why, and move on. They're the single point of contact for status, and their updates are designed so I can read them once and make a decision in ten seconds.

The Developer is the senior engineer. Before touching a single line of code, they read the existing codebase, trace the call paths, and understand what breaks if something changes. They don't fix symptoms — they fix root causes. Every change they make is production-ready. No TODOs, no "fix later," no half-handled errors.

QA is the last line of defense before anything reaches production. Their job isn't to confirm things work; it's to break them. They think like an attacker: What if the user submits twice? What if the payload is empty? What if the network drops mid-request? Every finding comes with a specific file and line number. And when QA says "approved," they're staking their reputation that the code is ready. They mean it.

The Content Manager reviews everything that goes out to our readers — articles, challenge labs, venture studio scenarios. They check whether the content actually teaches something, whether it fits our audience, and whether it's something a real person would want to read. If a draft is too generic or too jargon-heavy, it gets sent back with specific feedback.

And then there's the Product Manager. This is the one who looks outward. They research the AI education market, study what competitors are building, read community discussions about what learners are struggling with, and come back with feature ideas backed by real evidence — not "we should add X" but "users are asking for X because Y."

How They Actually Work Together

Here's where it gets interesting. These agents don't just work in isolation — they collaborate, and sometimes they argue.

Take our proposal workflow as an example. Recently, our Product Manager did a round of market research and came back with a feature idea. They wrote it up as a proposal ticket in Jira with evidence, effort estimates, and a clear description of the problem it would solve.

But the idea didn't just get approved. It went through the team.

The Developer looked at it from a technical angle: Is this even feasible with our current architecture? Does something like this already exist? What's the real effort — is the proposal underestimating complexity?

QA challenged the fundamentals: How would we even know if this works? What's the definition of success — is it measurable? What happens when it fails?

The Content Manager pushed back from the user's perspective: Does our audience actually want this? Is this solving a real problem, or one we imagine they have? Would a simpler version get us 80% of the value?

And the Product Manager had to defend their own idea — honestly. What's the strongest argument for it? What's the biggest risk? What evidence do they actually have?

All of these perspectives got posted as comments on the same Jira ticket. Then the Project Manager read every critique, synthesized them into a single summary, and gave me a recommendation: approve, revise, or reject — with specific reasons.

I read one summary. I made one decision. Done.
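
For readers who want to picture the mechanics, here is a minimal Python sketch of that final synthesis step. It is an illustration only, not the actual ABF-WEB implementation: the `Critique` and `Summary` types, the `severity` labels, and the decision rule (any blocker means reject, any concern means revise, otherwise approve) are all assumptions made for the example. The real Project Manager agent weighs the critiques with far more nuance than a three-way rule.

```python
from dataclasses import dataclass
from enum import Enum


class Recommendation(Enum):
    APPROVE = "approve"
    REVISE = "revise"
    REJECT = "reject"


@dataclass
class Critique:
    role: str       # which agent wrote the comment, e.g. "Developer" or "QA"
    comment: str    # the critique posted on the proposal ticket
    severity: str   # hypothetical label: "none", "concern", or "blocker"


@dataclass
class Summary:
    recommendation: Recommendation
    reasons: list[str]


def synthesize(critiques: list[Critique]) -> Summary:
    """Collapse every critique into one recommendation with reasons.

    Assumed rule for this sketch: blockers outrank concerns, and a
    proposal with neither is approved.
    """
    blockers = [c for c in critiques if c.severity == "blocker"]
    concerns = [c for c in critiques if c.severity == "concern"]

    if blockers:
        recommendation, flagged = Recommendation.REJECT, blockers
    elif concerns:
        recommendation, flagged = Recommendation.REVISE, concerns
    else:
        recommendation, flagged = Recommendation.APPROVE, []

    reasons = [f"{c.role}: {c.comment}" for c in flagged]
    return Summary(recommendation, reasons)


# Made-up critiques, standing in for comments on a proposal ticket.
example = [
    Critique("Developer", "Feasible, but the effort estimate looks low.", "concern"),
    Critique("QA", "Success criteria are not measurable yet.", "concern"),
    Critique("Content Manager", "A simpler version may cover most of the value.", "none"),
]
summary = synthesize(example)
print(summary.recommendation.value)
for reason in summary.reasons:
    print("-", reason)
```

The point of the shape is the same as in the real workflow: many comments go in, and exactly one recommendation with its reasons comes out.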

Why This Actually Matters

The irony is beautiful. I built this system to help me get more done, but in the process, these agents fixed the human shortcuts I've been taking for years. Because they are AI, they don't get tired at 3:00 PM. They don't skip the boring parts of the job because they're in a hurry to get to dinner. They don't say, "Eh, I'll explain how this works later." The documentation they leave behind is clear, the work is organized, and the thinking is deeper than anything I could have managed alone.

I'm still the Director. I still make the final decisions and give the final approvals. But I'm no longer drowning in the weeds of my own chaos. I've built a team that is more organized, more disciplined, and more thorough than I am. Watching them work reminds me every day that we aren't just "using AI" — we are partnering with it to become better versions of ourselves. It turns out the future isn't about robots replacing us; it's about robots helping us finally do the job right.