From Midnight Snack to Creative Giant: The Rise of Nano Banana

If you stepped into a time machine and traveled back just a couple of years, the phrase "Nano Banana" would likely conjure images of a genetically modified fruit or a particularly small snack. Fast forward to today, and it's the name of the most talked-about AI image engine on the planet.

But how did a tool with such a goofy name become the gold standard for digital art? And why is everyone—from professional designers to grandmas making birthday cards—obsessed with it? Grab a seat (and maybe a snack); we're peeling back the layers on the AI revolution's favorite fruit.

The 2:30 a.m. "Oops": A Name for the History Books

Most tech giants spend millions on branding agencies to come up with names like Axiom, Nexus, or Lumina. Google's most successful image model was named during a late-night caffeine haze.

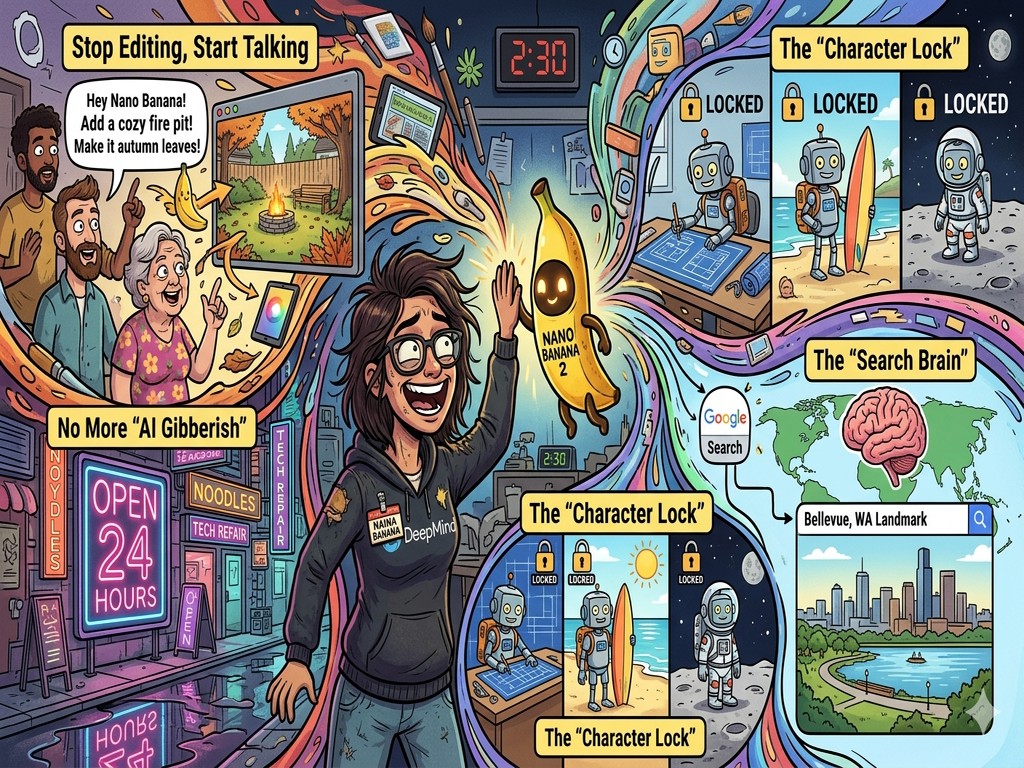

As the legend goes, Naina Raisinghani, a Product Manager at Google DeepMind, was working late into the night on a "Flash" version of the team's new image model. In a moment of sleep-deprived whimsy, she reportedly mashed together two of her own nicknames, "Naina Banana" and "Nano," to come up with a placeholder.

When the model was released for "blind testing" on platforms like LMArena, the team kept the placeholder name so that people would judge the tech, not the brand. The results? People didn't just like the tech; they fell in love with the name. By the time the marketing team suggested a "serious" name, the internet had already spoken. Nano Banana was here to stay.

1. Stop Editing, Start Talking: The Death of the "Undo" Button

The first reason Nano Banana is a game-changer is how it handles edits. For decades, if you wanted to change something in a photo, you needed "The Knowledge." You needed layers, masks, clone stamps, and a $20-a-month subscription to professional software.

Nano Banana 2 introduced Conversational Editing. It treats you like a creative director, not a software operator. You can take a photo of your backyard and say, "Hey, can you add a cozy fire pit in the middle and make the grass look like it's autumn?" The "magic" isn't just that it adds a fire pit; it's that the result respects the lighting and physics of the scene. The fire pit casts a warm orange glow on the nearby trees. The autumn grass isn't just brown; it's a mix of gold and amber. High-level photo manipulation becomes as easy as sending a text message.

2. No More "AI Gibberish": The Spelling Bee Champion

If you've played with AI art before, you've seen the "cursed" text. You ask for a sign that says "Coffee Shop" and you get something that looks like ancient runes from a forgotten dimension.

Nano Banana 2 effectively "graduated" from spelling school. It's one of the first models that can render crisp, high-fidelity text in almost any font or style. Want a neon sign that says "Open 24 Hours" in a rainy cyberpunk alleyway? It'll get every letter right, including the reflection of the "N" in the puddles.

For the general public, this is massive. Small business owners are now using it to generate actual marketing flyers. Teachers are using it to create classroom posters. It has turned AI from a "cool toy" into a "functional tool" because, for the first time, you don't have to open a second app to fix the typos the AI made.

3. The "Character Lock": Your Personal Digital Cast

One of the biggest frustrations with early AI was "identity amnesia." If you generated a cool character—say, a brave little robot named Sparky—and then asked for a second picture of Sparky at the beach, the AI would give you a completely different robot.

Nano Banana solved this with Subject Consistency. It allows you to "lock" a character's appearance. You can define a person or an object, and the model will remember exactly what they look like across hundreds of different prompts.

This has birthed a new era of "Solopreneur" storytelling. People are writing entire children's books or creating consistent brand mascots without ever picking up a stylus. You can place your "locked" character on a snowy mountain, in a bustling city, or aboard a spaceship, and they will look like the same "person" every single time. It's like having a Hollywood cast that lives inside your computer.

4. The "Search Brain": AI Grounded in Reality

The final piece of the puzzle is Grounding. Most AI models are like students who studied for a test three years ago and then were locked in a room. They don't know what's happening now.

Because Nano Banana is built by Google, it has a "Search Brain." If you ask it to create an image of the newest electric car released last week, or a specific landmark in Bellevue, Washington, it doesn't have to guess. It can look at real-world data to make sure the proportions, colors, and details are factually accurate.

This makes it incredibly useful for "visualizing" things that are real. It bridges the gap between "fantasy art" and "functional imagery." Whether you're planning a home renovation or trying to explain a complex scientific concept, the AI is pulling from a library of real-world knowledge, not just random pixels.

Conclusion: The "Everyperson's" Creative Assistant

Nano Banana isn't just "good" because it makes pretty pictures. It's good because it lowered the "barrier to entry" for creativity. It took the power of a professional design studio and shrank it down into a tool that responds to human conversation.

It's a reminder that sometimes the best technology doesn't have to feel cold and corporate. Sometimes, it can be a little bit "banana." It's fast, it's friendly, and it's finally making the dream of "art for everyone" a reality.

Whether you're using it to design a logo, illustrate a story for your kids, or just see what your dog would look like as an astronaut, Nano Banana 2 is proving that the future of AI isn't just about being smart—it's about being useful.